Disruption Edge: GPT 5.0 - Implications for AI Sentiment

High Bandwidth Memory, Cigarette Butt Investing, Critical Minerals

The Shifting Winds of AI Sentiment

Artificial intelligence dominated the headlines this week, but the narrative shifted to a more bearish tone. We believe the selloff was mainly driven by a new MIT report looking at AI deployments. The report found that 95% of corporate deployments failed, which many took to mean AI is not as useful as the market currently believes.

However, we think the report held other important conclusions that were, overall, positive for the continued adoption of AI. To us, this report was more a roadmap for how corporate teams can emulate the 5% of projects that achieved millions in cost and efficiency savings than a warning sign of AI’s lack of usefulness. Here are our main takeaways from the report.

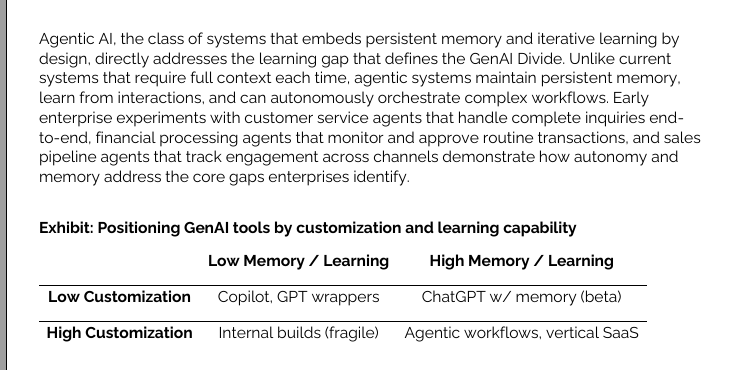

AI with persistent memory, continuous learning, and autonomous orchestration, called agentic AI, is the future of AI tools.

We see AI as having two phases so far: testing and production. In the testing phase (LLM training runs), incremental improvements are slowing, while the production phase (inference), along with models that can remember the history of a project, is exploding in use. Each time a model reasons, it adds information to memory, which increases the amount of data the GPU must pull from memory. This has the potential to drive even more demand for high bandwidth memory solutions. Right now, most models are constrained by memory bandwidth, not by GPU compute. In practice, this means the GPU chips are not always busy and sometimes spend more time waiting for memory than performing computations.
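To make the "waiting for memory" point concrete, here is a rough back-of-the-envelope sketch. It assumes that generating one token at batch size 1 requires reading all model weights plus the accumulated memory (the KV cache) once, and uses the common rule of thumb of roughly 2 FLOPs per parameter per token. The model size, bandwidth, and compute figures are hypothetical round numbers chosen for illustration, not the specifications of any particular chip or model.

```python
def decode_step_times(weight_bytes, kv_cache_bytes, flops_per_token,
                      mem_bandwidth, peak_flops):
    """Estimate (memory_seconds, compute_seconds) for generating one token.

    memory_seconds: time to stream weights + KV cache from memory once.
    compute_seconds: time to do the arithmetic at peak throughput.
    """
    memory_seconds = (weight_bytes + kv_cache_bytes) / mem_bandwidth
    compute_seconds = flops_per_token / peak_flops
    return memory_seconds, compute_seconds


# All values below are hypothetical, for illustration only.
params = 70e9                  # assumed parameter count
weight_bytes = params * 2      # 2 bytes per parameter (16-bit weights)
flops_per_token = 2 * params   # rule of thumb: ~2 FLOPs per parameter per token
mem_bandwidth = 3.0e12         # assumed memory bandwidth, bytes per second
peak_flops = 1.0e15            # assumed peak compute, FLOPs per second

mem_s, comp_s = decode_step_times(weight_bytes, 0, flops_per_token,
                                  mem_bandwidth, peak_flops)
print(f"memory time:  {mem_s * 1e3:.2f} ms per token")
print(f"compute time: {comp_s * 1e3:.2f} ms per token")
print("memory-bound" if mem_s > comp_s else "compute-bound")
```

Under these assumptions the memory time is far larger than the compute time, which is the sense in which the chip sits idle waiting for data. Serving many requests in one batch spreads the weight reads across users and narrows the gap, but each request's own memory still has to be read, so longer histories push the balance back toward memory.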

Improvements in AI models have slowed significantly, and most models are now roughly equivalent from an intelligence perspective.

This note from SemiAnalysis explains it best:

As models improve, they have increased in horizon lengths. What this means is that models are able to think, plan, and act for longer periods of time. This rate of increase has been exponential and has already manifested itself in superior products. Deep Research from OpenAI, for example, can think for tens of minutes at a time, while GPT-4 mustered mere tens of seconds.

As models can now think and reason over a long period of time, the pressure on memory capacity explodes as context lengths regularly exceed hundreds of thousands of tokens. Despite recent advances that have reduced the amount of KVCache generated per token, memory constraints still grow quickly.
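The scale of that memory pressure can be sketched with the standard KV cache sizing formula for transformer attention: each token stores one key and one value vector per layer per key/value head. The model configuration below is a hypothetical one we picked for illustration; it is not taken from the SemiAnalysis note or from any specific model.

```python
def kv_cache_bytes(num_tokens, num_layers, num_kv_heads, head_dim,
                   bytes_per_value=2):
    """Approximate KV cache size for a single sequence.

    The leading factor of 2 accounts for storing both a key and a value
    vector per token, per layer, per key/value head.
    """
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value
    return per_token * num_tokens


# Hypothetical configuration, for illustration only.
config = dict(num_layers=80, num_kv_heads=8, head_dim=128, bytes_per_value=2)

for tokens in (1_000, 100_000, 200_000):
    size_gb = kv_cache_bytes(tokens, **config) / 1e9
    print(f"{tokens:>7,} tokens -> {size_gb:.2f} GB of KV cache")
```

With this assumed configuration, each token adds 327,680 bytes, so a single 200,000-token context needs about 65.5 GB for the cache alone, before counting model weights. The growth is linear in context length and multiplies again across every concurrent user, which is why long-horizon reasoning translates so directly into memory demand.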

Big Winner: High Bandwidth Memory (SK Hynix, Micron, Samsung and other private companies)

We think the rise of inference could drive much higher high bandwidth memory (HBM) demand than is currently priced into companies like Micron. Micron’s stock performance has lagged the rest of the AI winners because a global slowdown in traditional computer memory demand has overshadowed the growth in HBM demand from AI training. However, Micron is starting to outperform the semiconductor index, which we think may be an indication the market is waking up to the potential for inference-driven HBM demand to outweigh any weakness in traditional memory sales.